GLIDE by OpenAI

Text-to-image - Photorealistic Image Generation

About GLIDE by OpenAI

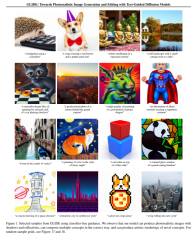

OpenAI recently launched GLIDE (Guided Language-to-Image Diffusion for Generation and Editing), a diffusion model that generates photorealistic images from natural language prompts. Its performance is comparable to DALL-E's at a much smaller size: GLIDE has 3.5 billion parameters, fewer than a third of DALL-E's 12 billion.

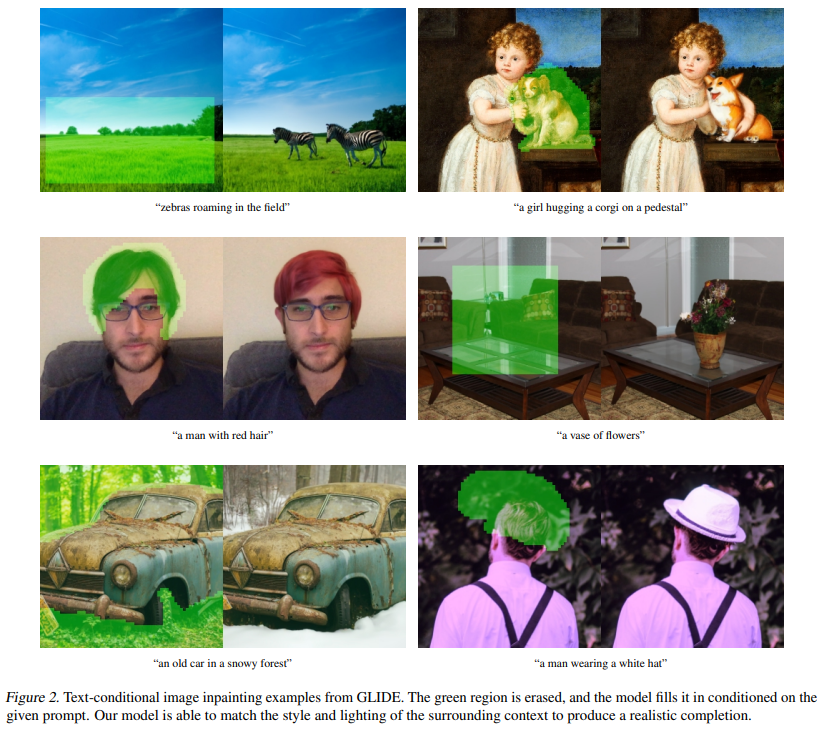

With GLIDE, users can quickly create visuals from text prompts and then refine them. It can also edit existing images from natural language instructions, for example adding objects, shadows, and reflections through inpainting. In addition, it can turn simple line drawings into photorealistic images, and it can perform zero-shot generation and inpainting in complex scenes.

Human evaluators preferred images generated by GLIDE over those from DALL-E, even though GLIDE has fewer parameters. GLIDE also has lower sampling latency and does not require CLIP reranking.
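For readers unfamiliar with the term, reranking generally means generating many candidate images, scoring each one against the prompt with a separate model such as CLIP, and keeping only the best matches. The sketch below illustrates that general idea only. The 2-D vectors are made-up stand-ins for real embeddings, the function names `cosine_similarity` and `rerank` are hypothetical, and none of this is OpenAI's code.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rerank(text_embedding, image_embeddings, top_k):
    """Return the indices of the top_k candidates closest to the text."""
    scored = [
        (cosine_similarity(text_embedding, emb), i)
        for i, emb in enumerate(image_embeddings)
    ]
    # Highest similarity first.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [i for _, i in scored[:top_k]]


# Toy data (assumed for illustration): one prompt, four candidate images.
text = [1.0, 0.0]
candidates = [[0.0, 1.0], [1.0, 0.1], [0.5, 0.5], [-1.0, 0.0]]

best = rerank(text, candidates, top_k=2)
print(best)
```

A pipeline that depends on this step pays for every discarded candidate, so a model that skips it needs fewer samples per usable image.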

