Gopher by DeepMind

280-billion-parameter language model

About Gopher by DeepMind

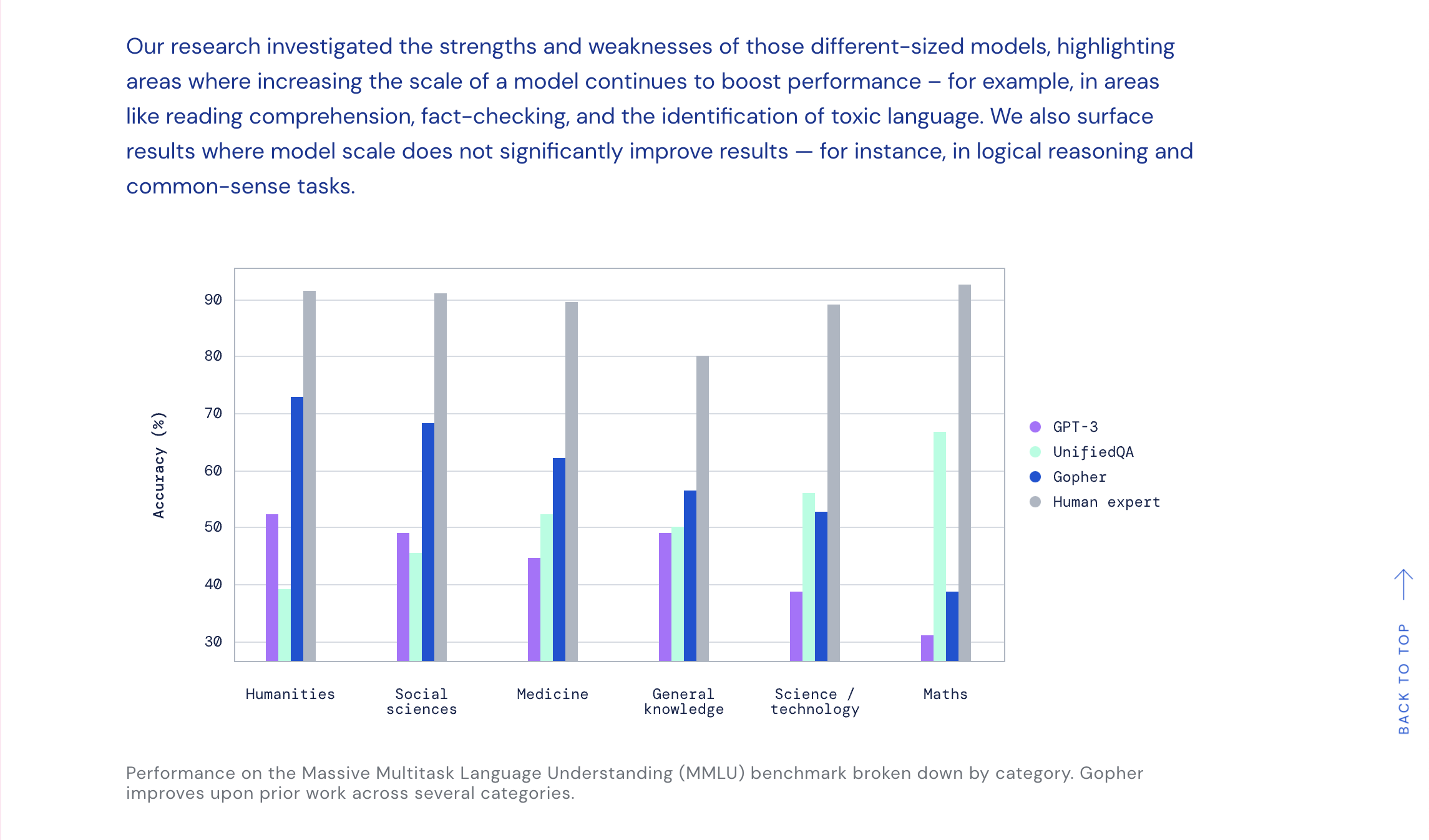

DeepMind has created a language model called Gopher that outperforms existing ultra-large language models on many tasks. With 280 billion parameters, Gopher is smaller than some other ultra-large language models. Alongside Gopher, DeepMind introduced Retro, a much smaller model with only 7 billion parameters that retrieves passages from its training text when generating output. DeepMind claims that Retro matches the performance of models 25 times its size, including OpenAI’s GPT-3. A further advantage of Retro is that bias or misinformation is easier to detect, because researchers can see which training text was used to produce the output.

Source: https://fortune.com/2021/12/08/deepmind-gopher-nlp-ultra-large-language-model-beats-gpt-3/
